Social Cognition & Mind Perception in HRI

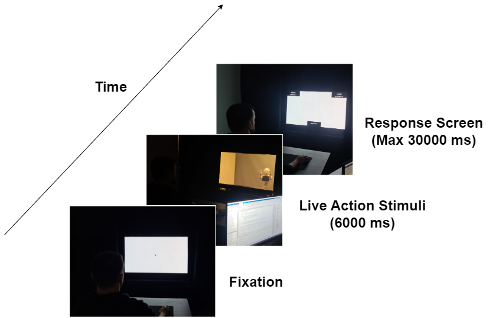

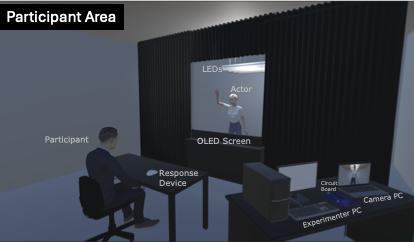

My doctoral research explored the dynamics of mind perception, specifically how humans ascribe intentions and feelings to robots. To uncover the determinants of this process, I investigated the effects of agent type, action type, and generational and individual differences, using a novel mixed-methods approach that combines real-time implicit metrics with explicit measures.