Human Perception of Robot Actions

Overview

'Do we process robot actions in the same way we process human actions?' Driven by this question, my research systematically compares human and robot action perception.

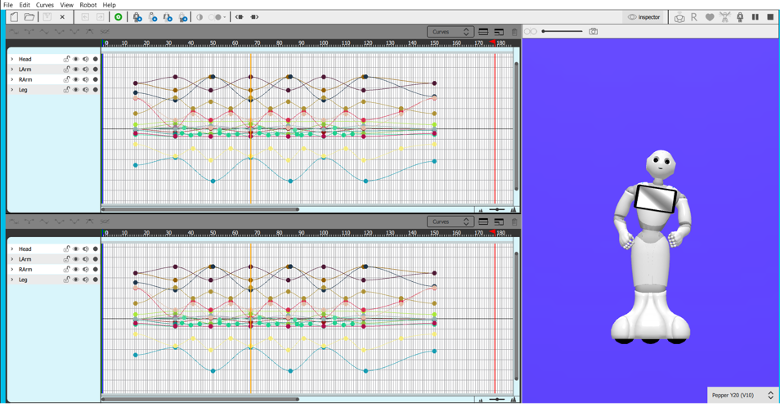

During my Ph.D., my team and I designed 40 communicative and noncommunicative actions for the Pepper robot and recorded the robot and a human actor performing the exact same movements. We tested these stimuli with nearly 450 participants and established the HR-ACT (Human-Robot Action) Database, a standardized resource that we believe can serve as a validated, comprehensive source of stimuli for future human-robot interaction (HRI) experiments.

Building on this work, my postdoctoral research investigated the multisensory nature of action understanding. We systematically explored how visual and auditory cues contribute to the identification and classification of actions. In a parallel line of research, we examined how robotic agents affect attention, comparing the distraction caused by robots with that caused by humans under varying perceptual loads.

Understanding these underlying mechanisms is essential for developing robots that communicate effectively through movement. By providing empirical evidence on how humans perceive robotic actions, this research offers insights into designing more natural behaviors that align with human expectations, ultimately enabling safer and more efficient human-robot collaboration.

Key Research Questions

- What are the cognitive mechanisms underlying action perception in HRI?

- How do people distinguish communicative from noncommunicative robot actions?

- What movement features make robot actions more interpretable to humans?

- How do contextual cues shape the interpretation of robot behaviors?

Related Publications

T. N. Pekçetin, G. Aşkın, Ş. Evsen, T. D. Karaduman, B. Barinal, J. Tunç, C. Acartürk, B. A. Urgen

Behavior Research Methods, 2026

Distraction by a Human or a Robot: Effects of Perceptual Load and Action Type

A. B. Özsu, T. N. Pekçetin, D. Ş. Faydalı, B. A. Urgen

Companion of the 2025 ACM/IEEE International Conference on Human-Robot Interaction, 2025

When Actions Meet Context: Exploring Visual and Auditory Cues in Human-Robot Action Perception

T. N. Pekçetin, K. Kıylıoğlu, M. N. Robinson, H. U. Uçar, B. A. Urgen

11th International Symposium on Brain and Cognitive Science, 2025

T. N. Pekçetin, G. Aşkın, Ş. Evsen, T. D. Karaduman, A. Eroğlu, B. Barinal, J. Tunç, C. Acartürk, B. A. Urgen

Proceedings of the Annual Meeting of the Cognitive Science Society 45, 2023